47 Questions to Ask When Choosing the Best AI Hiring Tool in 2026

Key Takeaways (TL;DR)

- Ask the right questions upfront: 70% of AI implementation failures stem from human factors, not technology—focus on strategic alignment, team readiness, and clear ROI metrics before evaluating features.

- Prioritize fairness and compliance: With EU AI Act fines reaching €35M and new US state regulations in 2025-2026, ensure vendors conduct regular bias testing and maintain SOC 2/ISO 27001 certifications.

- Demand transparency in AI decision-making: Require explainable scoring, human-in-the-loop controls, and detailed bias testing protocols—if hiring managers can't explain the rubric, don't deploy it.

- Calculate total cost of ownership accurately: Budget 50-75% above vendor quotes for hidden costs like integration, training, and overages—good AI tools deliver 3-10× ROI in year one.

- Validate vendor claims with industry references: Request client contacts facing similar hiring volumes and compliance requirements—retention rates and failure recovery processes reveal true vendor credibility.

The most successful AI hiring implementations combine technical excellence with ethical responsibility, ensuring tools enhance rather than replace human judgment while delivering measurable improvements in hiring speed, quality, and fairness.

AI is reshaping how companies find, assess, and hire talent, and AI hiring software is no longer optional [76]. Yet organizations burn budgets on stalled implementations because buyers ask the wrong questions [76]. The right AI hiring technology unlocks faster hiring, more equitable processes, and dramatically better talent outcomes [76]. This guide presents 47 essential questions across strategic, technical, ethical, and operational dimensions to help talent leaders make informed decisions when evaluating AI hiring tools in 2026.

Strategic Alignment and Business Case

Image Source: MokaHR

What business problem does this solve?

AI hiring tools address specific, measurable challenges that traditional recruitment cannot solve at scale. Time-to-hire, cost-per-hire, screening bias, candidate quality, and recruitment capacity represent the core problems most organizations face.

The business case is clear. Executives implementing AI-powered recruitment strategies report 35% more overall profitability relative to their competition [77]. Organizations can cut cost per hire by as much as 30% [77]. Candidates selected through AI-driven processes are 14% more likely to succeed in interviews [77].

Quantifying your current pain points creates the foundation for vendor evaluation. Organizations spending above industry benchmarks or experiencing extended time-to-fill periods lose productivity daily. Automating resume screening and scheduling targets these bottlenecks directly.
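A baseline can be as simple as time-to-fill computed from past requisitions. The Python sketch below assumes a hypothetical `Requisition` record with open and fill dates (the field names are illustrative, not any ATS's export format) and reports mean and median days; the median is less distorted by a single stalled senior search.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean, median


@dataclass
class Requisition:
    """One closed requisition (hypothetical record shape)."""
    role: str
    opened: date
    filled: date


def time_to_fill_days(reqs: list[Requisition]) -> dict[str, float]:
    """Mean and median days from requisition opening to fill."""
    durations = [(r.filled - r.opened).days for r in reqs]
    return {"mean": mean(durations), "median": median(durations)}


# Illustrative history, not real benchmarks.
history = [
    Requisition("Warehouse Associate", date(2025, 1, 6), date(2025, 1, 27)),
    Requisition("Warehouse Associate", date(2025, 2, 3), date(2025, 3, 10)),
    Requisition("Data Engineer", date(2025, 1, 13), date(2025, 3, 24)),
]
baseline = time_to_fill_days(history)
print(baseline)
```

Running the same calculation per role family gives a fairer benchmark than one blended number.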

How does it align with our hiring strategy?

AI shifts recruitment from reactive application processing to proactive talent identification. Predictive capabilities surface high-potential candidates before they apply. Data-driven insights enable recruiters to identify and connect with best-fit candidates rather than waiting for applications.

Clear objectives drive successful alignment. Reducing time-to-hire, enhancing candidate experience, minimizing bias, or predicting talent needs each require different AI capabilities. Organizations must determine where AI adds value: automating repetitive tasks, improving candidate sourcing, or enhancing interview processes.

Strategic alignment means choosing tools that support your specific recruitment model, not adopting AI for the sake of modernization.

What stakeholders need to be involved?

AI recruitment projects require input from multiple stakeholders, each bringing essential perspectives. Internal stakeholders include HR professionals who directly influence hiring processes. External stakeholders encompass regulators and compliance experts who ensure legal alignment.

Workers' representatives serve as the link between employees and employers, ensuring AI adoption meets both interests [77]. Passive stakeholders—individuals affected by AI systems but powerless to influence the project—require representation in planning stages [77].

Engaging developers, operators, and representatives of passive stakeholders achieves ethical and sustainable development [77]. This broad stakeholder involvement prevents costly oversights and ensures adoption across the organization.

Understanding the AI Foundation

Image Source: SplitMetrics

What machine learning models are used?

Modern AI hiring tools draw on three main learning approaches, each suited to different recruitment challenges. Supervised learning trains on historical hiring data with known outcomes, such as employee performance, retention rates, or promotion success [77]. The algorithm identifies patterns that separate strong hires from poor fits, building predictive models based on actual results rather than assumptions.

Unsupervised learning discovers hidden candidate segments without pre-labeled outcomes [76]. This approach reveals talent pools that traditional screening might overlook. Reinforcement learning improves through trial and error, adjusting recommendations based on hiring success or failure [76]. Each placement provides feedback that refines future candidate matching.

Deep learning processes large datasets through multi-layered neural networks, an architecture loosely inspired by how biological neurons connect [76]. These systems combine disparate information, such as work history, skills assessments, and interview responses, to form candidate profiles [76]. During training, the networks adjust their weights to reduce prediction error, and periodic retraining on new outcomes can make them more precise over time [76].

Is it predictive, prescriptive, or generative AI?

Predictive AI forecasts candidate success based on historical patterns and current data [76]. These models estimate which applicants will perform well, stay longer, or advance within the organization [76]. Predictive systems excel at ranking candidates by likelihood of success.

Prescriptive AI goes beyond predictions to recommend specific actions [77]. Instead of simply identifying top candidates, prescriptive models suggest interview questions, assessment types, or sourcing strategies to improve outcomes [76]. This approach guides recruiters toward better decisions rather than just providing rankings.

Generative AI creates original content by learning from existing patterns [76]. In hiring contexts, generative models draft job descriptions, personalize candidate communications, or create interview questions tailored to specific roles [77]. The content reflects training patterns while addressing unique requirements.

What scientific methods underpin the technology?

Statistical analysis forms the foundation, enabling systems to process large datasets and identify meaningful correlations over time [77]. Well-designed systems try to separate factors that actually drive hiring success from superficial patterns, although statistical models on their own cannot prove causation.

Neural network architectures, loosely modeled on how neurons connect, build layered analysis that considers multiple variables simultaneously [77]. These systems evaluate candidates holistically rather than relying on single metrics or keyword matching.

Training Data and Data Sources

Image Source: AIHR

What data is used to train the models?

AI recruiting tools build their understanding from multiple data streams. Candidate-submitted materials form the foundation: resumes, applications, assessment responses, and interview transcripts provide direct evidence of qualifications and competencies [77]. Historical ATS and CRM data creates the performance baseline through past applicant journeys, stage progression records, recruiter evaluations, and critical post-hire outcomes including performance ratings and retention periods [77].

Public professional profiles from LinkedIn, GitHub repositories, and portfolio sites validate claimed skills against demonstrated work [77]. Behavioral signals complete the picture. Response times to outreach, assessment completion rates, and communication channel preferences help predict candidate engagement levels and hiring intent [77].

Is it first-party or third-party data?

First-party data comes directly from your organization's interactions with candidates. Website visits, CRM touchpoints, email engagement, and application submissions create owned data streams where candidates knowingly share information [77]. You control collection, processing, and retention policies [77]. This direct relationship produces the highest accuracy because it captures actual behavior from known individuals rather than inferred patterns [77].

Third-party data aggregates information from external sources through companies with no direct candidate relationship [77]. Data brokers compile web activity, social media signals, and professional network information for resale to hiring organizations [77]. Third-party sources trade precision for breadth, offering wider reach through modeled and inferred information rather than directly observed candidate behavior [77].

How often is training data updated?

Model retraining frequency depends on pattern stability in what the system predicts [77]. Hiring patterns for established roles in stable industries may remain reliable for years without requiring fresh training data [77]. Market disruptions, regulatory changes, or significant shifts in candidate behavior trigger the need for updated training examples to maintain prediction accuracy [77].

Accuracy, Validity, and Performance

Image Source: Assess Candidates

What is the tool's predictive accuracy rate?

Performance metrics determine whether an AI hiring tool delivers real business value or becomes expensive shelf-ware. Organizations implementing AI-driven recruitment see a 30% increase in employee satisfaction and 25% reduction in turnover within the first year [76]. Retention rates improve by up to 20% when automated systems replace manual screening [76].

The data is clear. AI tools outperform humans in applicant screening by at least 25% [76]. L'Oréal cut resume review time from 40 minutes to 4 minutes using AI screening [76]. Data-driven hiring processes produce a 78% improvement in decision-making and a 24% increase in employee retention [76].

Neural networks and regression analysis identify specific traits and experiences that correlate with role success [76]. This precision translates directly to reduced mis-hires and faster placements.

Are there peer-reviewed validation studies?

A late-2023 IBM survey of over 8,500 global IT professionals showed 42% of companies were using AI screening, with another 40% considering integration [76]. Adoption is accelerating, but validation studies remain limited.

Many experts question whether tools actually identify the most qualified applicants [76]. This uncertainty creates risk for organizations investing in unproven systems. Demand peer-reviewed studies that demonstrate predictive validity for your specific role types and hiring context.

How does accuracy vary across different job types?

Accuracy depends heavily on role complexity and available training data. AI systems build models by analyzing recruitment patterns and matching user needs with company profiles [76]. Simple, high-volume roles with clear success metrics show higher accuracy than complex, senior positions with nuanced requirements.

Algorithm fairness and impartiality significantly affect performance across different positions [76]. Roles with strong historical data and clear success indicators enable more accurate predictions than positions with limited examples or subjective evaluation criteria.
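When a vendor quotes a single accuracy figure, it can help to ask for the same metric broken out by job family. This hypothetical sketch assumes each record pairs the tool's prediction with the observed outcome (for example, still employed and meeting expectations after a year) and reports accuracy alongside sample size, since a figure based on three hires says very little.

```python
from collections import defaultdict


def accuracy_by_job_family(records):
    """records: (job_family, predicted_success, actual_success) tuples.

    Returns {job_family: (accuracy, sample_size)} so small samples stay visible.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for family, predicted, actual in records:
        totals[family] += 1
        hits[family] += int(predicted == actual)
    return {family: (hits[family] / totals[family], totals[family]) for family in totals}


# Illustrative outcomes only.
outcomes = [
    ("warehouse", True, True), ("warehouse", True, True),
    ("warehouse", False, False), ("warehouse", True, False),
    ("senior_engineering", True, False), ("senior_engineering", False, True),
    ("senior_engineering", True, True),
]
print(accuracy_by_job_family(outcomes))
```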

Technical Integration Requirements

Image Source: Easy Hire Tool

What technical prerequisites are needed?

Modern recruiting tech stacks require more than standalone tools. An effective AI hiring platform connects seamlessly with your ATS, candidate engagement systems, interview scheduling tools, and analytics dashboards [77]. The best implementations feature tight integration rather than disconnected point solutions that create data silos [77].

Organizations should prioritize tools with robust API capabilities that enable real-time data synchronization between systems [77]. An API-first approach delivers three critical advantages: instant data flow between ATS, CRM, and AI tools; custom workflows that match your specific recruitment processes; and flexibility to add or replace components without disrupting operations [77].

Evaluating which AI hiring tool is best means assessing integration depth, recruiter usability, regulatory compliance, and proven ROI [77].

How does it connect to our tech stack?

Even comprehensive platforms require connections to HRIS systems, background check providers, assessment tools, and specialized solutions [76]. Native integrations consistently outperform third-party connectors in reliability and performance [76]. Poor integrations create data sync failures, force manual workarounds, and eliminate the efficiency gains AI promises [76].

Workflow continuity determines integration success. Candidates should progress from sourcing to application to offer without data loss or manual transfers [76]. Information must flow bidirectionally between systems to maintain accuracy across your entire tech stack [76].
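Bidirectional flow means both systems can change the same candidate record, so something has to resolve conflicts. The sketch below shows one simple policy, field-level last-write-wins with the ATS winning ties; the record shape and field names are hypothetical, and real integrations may instead assign a system of record per field or replay an event log.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class FieldValue:
    value: str
    updated_at: datetime
    source: str  # which system last wrote this field, e.g. "ats" or "ai_tool"


def merge_candidate(ats: dict[str, FieldValue], ai_tool: dict[str, FieldValue]) -> dict[str, FieldValue]:
    """Field-level last-write-wins merge; ties go to the ATS as system of record."""
    merged: dict[str, FieldValue] = {}
    for name in ats.keys() | ai_tool.keys():
        a, b = ats.get(name), ai_tool.get(name)
        if a is None or (b is not None and b.updated_at > a.updated_at):
            merged[name] = b
        else:
            merged[name] = a
    return merged


ats_record = {
    "stage": FieldValue("phone_screen", datetime(2026, 3, 2, 9, 0), "ats"),
    "ai_score": FieldValue("71", datetime(2026, 3, 1, 8, 0), "ats"),
}
ai_record = {
    "stage": FieldValue("applied", datetime(2026, 3, 1, 8, 0), "ai_tool"),
    "ai_score": FieldValue("78", datetime(2026, 3, 2, 10, 0), "ai_tool"),
}
merged = merge_candidate(ats_record, ai_record)
print({name: (fv.value, fv.source) for name, fv in merged.items()})
```

The recruiter's stage change in the ATS survives, while the newer AI score flows back, which is the behavior a one-way export would lose.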

Are there workflow automation capabilities?

Workflow automation reduces manual effort through predefined rules and sequences [77]. Effective automation enables recruiters to focus on relationship-building rather than administrative tasks. It reduces errors through consistent execution and improves team collaboration through centralized communication [77].

The best AI hiring tools automate candidate progression, interview scheduling, follow-up communications, and status updates without losing the human touch that candidates expect.
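Predefined rules and sequences can be pictured as a table mapping stage changes to actions. In this minimal, hypothetical sketch the actions only write to a log; in a real deployment they would call scheduling, email, or ATS integrations. The `borderline` rule hands the candidate to a recruiter instead of sending an automated message.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Candidate:
    name: str
    stage: str
    log: list[str] = field(default_factory=list)


def send_scheduling_link(c: Candidate) -> None:
    c.log.append(f"scheduling link sent to {c.name}")


def notify_recruiter(c: Candidate) -> None:
    c.log.append(f"recruiter notified: {c.name} needs human review")


def send_status_update(c: Candidate) -> None:
    c.log.append(f"status update sent: {c.name} is now in '{c.stage}'")


# Hypothetical rule table: stage entered -> actions to run, in order.
RULES: dict[str, list[Callable[[Candidate], None]]] = {
    "screen_passed": [send_scheduling_link, send_status_update],
    "borderline": [notify_recruiter],
    "final_interview_done": [notify_recruiter, send_status_update],
}


def move_to_stage(c: Candidate, new_stage: str) -> None:
    """Advance a candidate and run every rule registered for the new stage."""
    c.stage = new_stage
    for action in RULES.get(new_stage, []):
        action(c)


cand = Candidate("A. Rivera", "applied")
move_to_stage(cand, "screen_passed")
print(cand.log)
```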

Fairness Testing and Methodology

Image Source: Warden AI

What bias tests are conducted and how often?

Bias testing frequency determines whether AI hiring tools maintain fairness over time. Organizations using adversarial debiasing with diverse datasets and fairness constraints achieve a 30% increase in hiring diversity and a 40% reduction in detected bias [77].

Counterfactual testing compares how candidates with identical qualifications score when only demographic identifiers change [77]. If two resumes differ only by name—"Michael Johnson" versus "Jamal Washington"—but receive different scores, the system shows bias. Consistency testing verifies that candidates with similar qualifications receive uniform treatment regardless of background [77].
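Counterfactual testing can be scripted against any scoring function: copy a resume, change only one attribute, and compare scores. `biased_scorer` below is a deliberately biased stand-in invented for the demo, not a real vendor API; in practice you would call the vendor's scoring endpoint and run many resumes and name sets rather than a single pair.

```python
from typing import Callable

Scorer = Callable[[dict], float]


def counterfactual_gap(score: Scorer, resume: dict, attribute: str, variants: list[str]) -> float:
    """Score copies of one resume that differ only in `attribute`; return max - min score."""
    scores = []
    for value in variants:
        probe = dict(resume)  # shallow copy so only one attribute changes
        probe[attribute] = value
        scores.append(score(probe))
    return max(scores) - min(scores)


def biased_scorer(resume: dict) -> float:
    """Hypothetical scorer that (wrongly) penalizes one name, for demonstration."""
    base = 10.0 * resume["years_experience"] + 5.0 * len(resume["skills"])
    return base - (8.0 if resume["name"] == "Jamal Washington" else 0.0)


resume = {"name": "Michael Johnson", "years_experience": 5, "skills": ["SQL", "Python"]}
gap = counterfactual_gap(biased_scorer, resume, "name", ["Michael Johnson", "Jamal Washington"])
print(f"score gap from name alone: {gap}")  # any non-zero gap means the name changed the score
```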

Testing schedules matter. Monthly checks catch drift early. Quarterly reviews identify broader patterns. Annual audits satisfy compliance requirements but miss real-time problems.

Can you explain proportional parity and fairness tests?

Statistical parity requires proportional outcomes across demographic groups [76]. When 10% of male applicants advance to interviews, 10% of female applicants should also advance [76].

The Four-Fifths Rule provides a practical benchmark for identifying bias [77]. Calculate the selection rate for each group, then compare the lowest rate to the highest. A trucking company hiring 6.6% of male applicants and 5.5% of female applicants passes this test: 5.5 ÷ 6.6 = 83.3%, which exceeds the 80% threshold [77].
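The four-fifths calculation is short enough to automate in a reporting job. The applicant counts below are assumed (the example gives only the 6.6% and 5.5% rates). The rule comes from the US Uniform Guidelines on Employee Selection Procedures and is a rule of thumb, not a significance test, so small samples can pass or fail by chance.

```python
def selection_rates(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Selection rate per group: selected / applicants."""
    return {g: selected[g] / applicants[g] for g in applicants}


def four_fifths_check(applicants: dict[str, int], selected: dict[str, int],
                      threshold: float = 0.8) -> tuple[float, bool]:
    """Return (impact_ratio, passes): lowest selection rate divided by highest."""
    rates = selection_rates(applicants, selected)
    ratio = min(rates.values()) / max(rates.values())
    return ratio, ratio >= threshold


# Counts chosen to reproduce the trucking example: 6.6% of men and 5.5% of women hired.
applicants = {"male": 1000, "female": 1000}
hired = {"male": 66, "female": 55}
ratio, passes = four_fifths_check(applicants, hired)
print(f"impact ratio = {ratio:.1%}, passes = {passes}")
```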

This rule catches obvious disparities but misses subtle patterns. Advanced testing examines multiple protected classes simultaneously and tracks performance across different job types.

How is data drift monitored?

Data drift occurs when the model receives different inputs than during training, causing performance decline [76]. Fairness drift presents a more serious problem—protected-class outcomes diverge gradually, even when systems initially passed bias tests [77].

Monitor whether outcomes for protected classes shift over time [77]. A system might start fair but develop bias as hiring patterns change. Distance metrics quantify how far current data distributions have moved from baseline measurements [76].

Continuous monitoring beats periodic audits. Automated alerts flag when fairness metrics drop below acceptable thresholds. Manual reviews investigate root causes and implement corrections before problems compound.
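The source does not name a specific distance metric; the Population Stability Index (PSI) is one widely used option for comparing a current distribution with a training-time baseline. This sketch bins applicants by score band and maps PSI to an alert level using the commonly cited 0.1 and 0.25 cutoffs, which are conventions rather than standards. The same check can run on per-group selection rates to watch for fairness drift.

```python
import math


def psi(baseline: list[float], current: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions (proportions per bin)."""
    if len(baseline) != len(current):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for expected, actual in zip(baseline, current):
        expected, actual = max(expected, eps), max(actual, eps)  # avoid log(0) on empty bins
        total += (actual - expected) * math.log(actual / expected)
    return total


def drift_alert(value: float, warn: float = 0.1, alarm: float = 0.25) -> str:
    """Map a PSI value to a status using rule-of-thumb cutoffs."""
    if value >= alarm:
        return "alarm"
    if value >= warn:
        return "warn"
    return "ok"


# Share of applicants per score band at training time vs. this month (illustrative numbers).
baseline = [0.10, 0.20, 0.40, 0.20, 0.10]
current = [0.05, 0.15, 0.35, 0.25, 0.20]
value = psi(baseline, current)
print(f"PSI = {value:.3f} -> {drift_alert(value)}")
```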

Protected Attributes and Proxy Variables

Image Source: HackerEarth

Does the tool use or avoid protected characteristics?

California regulations hold employers liable for discriminatory hiring decisions regardless of whether AI tools generate those decisions [76]. Simply removing names from resumes is insufficient, because models can learn features that correlate with protected attributes and use them as substitutes.

Amazon discovered this the hard way. The company shut down its internal resume screener after the system learned to penalize the word "women's" from historical hiring patterns the company wanted to change [76]. The tool had absorbed roughly a decade of male-dominated hiring decisions and replicated that bias automatically.

How are proxy variables identified and eliminated?

Proxy variables hide in plain sight. ZIP codes correlate with race. Graduation years correlate with age. Specific extracurricular activities correlate with socioeconomic background [77]. One resume screening tool flagged being named Jared and playing high school lacrosse as indicators of successful employees, despite neither having job relevance [76] [77].
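Before paired testing, a quick screen can flag features that are statistically associated with a protected attribute. This sketch computes Cramér's V (0 means no association, 1 means the feature fully determines the group) from a contingency table; the ZIP and group labels are placeholders. A high value does not prove the model uses the feature as a proxy, only that it could.

```python
import math
from collections import Counter


def cramers_v(feature: list[str], group: list[str]) -> float:
    """Association between a candidate feature and a protected group (0 = none, 1 = perfect)."""
    n = len(feature)
    joint = Counter(zip(feature, group))
    f_counts, g_counts = Counter(feature), Counter(group)
    chi2 = 0.0
    for f in f_counts:
        for g in g_counts:
            expected = f_counts[f] * g_counts[g] / n
            chi2 += (joint[(f, g)] - expected) ** 2 / expected
    k = min(len(f_counts), len(g_counts)) - 1
    return math.sqrt(chi2 / (n * k)) if k > 0 else 0.0


zip_codes = ["zip_A", "zip_A", "zip_A", "zip_B", "zip_B", "zip_B"]
groups = ["group_1", "group_1", "group_2", "group_2", "group_2", "group_2"]
v = cramers_v(zip_codes, groups)
print(f"Cramér's V = {v:.2f}")
```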

Voice-based AI trained on narrow accent sets produces systematically lower scores for multilingual candidates [77]. The bias appears neutral but creates discriminatory outcomes through seemingly objective technical choices.

Paired testing exposes proxy discrimination effectively. Submit identical candidate profiles that differ only on demographic signals like name, school, ZIP code, or graduation year [77]. When scores diverge meaningfully, the model uses those signals as proxies for protected characteristics.

What safeguards prevent discriminatory outcomes?

Explainable scoring allows recruiters to audit whether AI relies on proxy variables rather than job-related factors [78]. If the system cannot explain why a candidate scored higher or lower in terms that relate to role requirements, it should not be deployed.

Human-in-the-loop design routes borderline candidates to recruiters while automating only clear matches [78]. This approach maintains efficiency while preserving human oversight where AI confidence is lower.
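Human-in-the-loop routing can be expressed as a pair of thresholds. The cutoffs in this sketch (0.85 match score, 0.90 model confidence) are hypothetical values a team would set and review. It automates only clear advances; every other case, including low scores, goes to a recruiter rather than being auto-rejected.

```python
def route(match_score: float, confidence: float,
          advance_at: float = 0.85, min_confidence: float = 0.90) -> str:
    """Automate only clear, high-confidence matches; everything else goes to a recruiter."""
    if match_score >= advance_at and confidence >= min_confidence:
        return "auto_advance"
    return "recruiter_review"


def triage(candidates: list[tuple[str, float, float]]) -> dict[str, list[str]]:
    """Split (candidate_id, match_score, confidence) tuples into work queues."""
    queues: dict[str, list[str]] = {"auto_advance": [], "recruiter_review": []}
    for candidate_id, score, confidence in candidates:
        queues[route(score, confidence)].append(candidate_id)
    return queues


queues = triage([("c-101", 0.93, 0.97), ("c-102", 0.88, 0.62), ("c-103", 0.41, 0.95)])
print(queues)
```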

Continuous monitoring proves essential because outcomes matter more than intent under disparate impact standards [80]. Regular third-party audits and demographic parity tracking identify statistical anomalies before they compound into legal liability [78]. Distance metrics quantify how far apart outcomes are across protected groups, providing early warning signals when systems drift toward discrimination.

Regulatory Compliance Framework

Image Source: Warden AI

What compliance certifications do you hold?

Compliance certifications separate serious vendors from those treating data protection as an afterthought. SOC 2 and ISO 27001 certifications provide third-party validation that vendors actually implement the security controls they claim [13]. GDPR compliance is mandatory, not optional, for any vendor processing European candidate data [13].

Platforms like MokaHR demonstrate proper implementation by combining privacy-by-design architecture with verified SOC 2 and ISO 27001 certifications [13]. SAP SuccessFactors goes further, supporting GDPR, EEO/OFCCP, and country-specific regulations through configurable workflows and comprehensive audit trails [13].

How do you handle international hiring regulations?

Regulatory authorities in both the U.S. and EU now classify recruitment AI as high-risk technology [2]. The compliance landscape has shifted dramatically with new regulations taking effect across multiple jurisdictions.

The EU AI Act entered into force on August 1, 2024, with fines for the most serious violations of up to €35 million or 7% of annual global turnover, whichever is higher [2]. California regulations effective October 1, 2025 explicitly prohibit employers from using AI systems that harm applicants based on protected characteristics [2]. Illinois amendments take effect January 1, 2026, barring AI that subjects employees to discrimination [2]. Colorado's AI Act becomes effective February 1, 2026, requiring employers with more than 50 employees to conduct annual impact assessments [2].

These aren't distant requirements. They are current or imminent legal obligations that create real financial and legal exposure.

Are there compliance monitoring features built-in?

In several jurisdictions, impact assessments are now required before AI tools are incorporated into hiring processes [2]. Organizations must conduct both Privacy Impact Assessments and AI Impact Assessments together, not separately [2]. Human intervention serves as a required safeguard under multiple regulatory frameworks [2].

Built-in compliance monitoring eliminates the manual overhead of tracking these requirements across different jurisdictions and regulatory frameworks.

Data Privacy and Security Measures

Image Source: LeewayHertz

What encryption standards are used?

Candidate data demands enterprise-grade protection. Transport encryption using TLS 1.2 or higher protects data in motion, while AES-256 encryption secures data at rest [14]. Strong key management with customer-managed or segregated keys prevents unauthorized access [14]. Multi-factor authentication and role-based access control add essential layers of protection [15].

Platforms like Grayscale operate on secure, region-specific AWS environments with role-based access, audit logs, and customizable permissions built into the system architecture [16]. Single-tenant deployment with customer-controlled encryption keeps each customer's data isolated, supporting data sovereignty requirements for regulated enterprises [17]. This matters because hiring teams handle sensitive personal information daily.
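For integration scripts that push candidate data to a vendor over HTTPS, Python's standard `ssl` module can enforce the TLS 1.2 floor. This sketch covers only transport encryption; AES-256 at rest and key management happen on the storage side and are not shown. The vendor URL in the comment is a placeholder.

```python
import ssl

# Client-side context: verifies certificates and hostnames, and refuses anything below TLS 1.2.
context = ssl.create_default_context()
context.minimum_version = ssl.TLSVersion.TLSv1_2

# Usage (placeholder endpoint):
# urllib.request.urlopen("https://vendor.example/api/candidates", context=context)
print(context.minimum_version, context.verify_mode == ssl.CERT_REQUIRED)
```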

How is PII redacted and protected?

The numbers tell the story. Nearly 46% of data breaches in 2025 involved customer personally identifiable information (PII), with the average cost per PII record reaching USD 169 [18]. With stakes this high, pattern-based redaction alone is not enough.

Data tokenization replaces sensitive values with non-reversible tokens before they reach the AI model [17]. This provides stronger guarantees than pattern-based redaction by ensuring regulated data never leaves the customer's control boundary. PII redaction software uses pattern matching, machine learning, AI, and OCR to accurately identify and redact sensitive information [18].
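Keyed hashing (HMAC) is one way to produce deterministic, non-reversible tokens: the same email always maps to the same token, so records can still be joined, but the token alone does not reveal the value. The sketch assumes a hypothetical secret `TOKEN_KEY`, and anyone holding that key could brute-force low-entropy values such as phone numbers, so it must stay inside the customer's boundary. It covers only emails and phone numbers via regular expressions; names such as "Dana" pass through untouched, which is why the pattern, ML, and OCR combination described above matters.

```python
import hashlib
import hmac
import re

# Hypothetical secret; in practice it would live in a customer-controlled KMS or HSM.
TOKEN_KEY = b"replace-with-a-customer-managed-secret"

# One alternation so text is scanned once and tokens are never re-matched.
PII_RE = re.compile(
    r"(?P<EMAIL>[\w.+-]+@[\w-]+(?:\.[\w-]+)+)"
    r"|(?P<PHONE>\+?\d[\d\s().-]{7,}\d)"
)


def tokenize(value: str, kind: str) -> str:
    """Deterministic token: same input gives the same token; the token alone does not reveal the value."""
    digest = hmac.new(TOKEN_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"<{kind}_{digest[:12]}>"


def tokenize_pii(text: str) -> str:
    """Replace email addresses and phone numbers before the text is sent to a model."""
    return PII_RE.sub(lambda m: tokenize(m.group(0), m.lastgroup), text)


note = "Contact Dana at dana.lee@example.com or +1 (555) 010-2345 about the role."
redacted = tokenize_pii(note)
print(redacted)
```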

What third-party security audits have been completed?

Independent validation matters more than vendor promises. SOC 2 Type II and ISO 27001 certifications evidence mature, audited security and privacy programs covering controls relevant to candidate data [14].

SOC 2 Type II evaluates control effectiveness over time, while ISO 27001 confirms an information security management system is designed, implemented, and continuously improved [14]. These certifications represent third-party validation that security practices meet industry standards, not just marketing claims.

Candidate-Facing Experience

What does the candidate journey look like?

Job seekers increasingly research employers through AI before visiting career sites: 37% use AI platforms to discover and research companies [19]. Candidates ask these platforms whether companies promote from within, what the engineering culture looks like, or whether an employer ranks among the best remote workplaces.

AI removes friction through self-serve interview scheduling, timely reminders, and status updates that reduce no-shows [20]. Transparency about the hiring timeline builds trust, particularly for senior-level talent. Sharing the number of steps involved allows candidates to prepare mentally and logistically [21].

Offering choice between AI-driven or human-led paths respects individual communication preferences and keeps qualified talent engaged [21]. The best systems adapt to candidate preferences rather than forcing a one-size-fits-all approach.

Is the assessment accessible for diverse candidates?

Accessible processes enable disabled candidates to demonstrate skills fairly. AI can simplify instructions, rewrite complex requirements, and provide examples for clarity [22].

Organizations should detail accessibility features prominently, include information about requesting reasonable accommodations in every email, and evaluate adjustment requests case by case, even without medical evidence [7]. Disability takes many forms, so accessibility needs continual refinement.

The strongest platforms build accessibility into their core design rather than treating it as an afterthought. This approach benefits all candidates, not just those with declared disabilities.

How does the tool impact employer brand?

AI amplifies whatever signal organizations already project. When messaging across career pages, job descriptions, and content remains generic, AI summaries appear generic [3].

Over 50% of candidates believe AI screens them, yet only 26% trust that the process is fair [23]. This trust gap creates a significant employer brand risk. Companies creating specific, honest content win comparisons against competitors using vague claims.

Poor candidate experiences spread faster than positive ones. AI tools that create friction, confusion, or perceived unfairness damage employer brand reputation long after individual hiring cycles end.

Customization Depth and Control

How customizable are assessment criteria?

Scoring accuracy depends on control over evaluation criteria. Organizations must define required competencies and performance standards for each dimension before candidates submit responses [24]. Explicit rubrics describe what high-quality, adequate, and weak responses look like for every criterion. These rubrics require review and approval from hiring teams before scoring begins [24].

Dover uses custom prompts to rank candidates based on criteria organizations set for each role [25]. Workable allows teams to customize scoring weights based on role priorities [25]. CLARA adjusts criteria weights for each position, keeping hiring teams in control of candidate screening [26]. Vervoe offers fully customizable assessments with employer branding, personalization, and adjustable test questions [27].

Can we create job-specific scoring models?

AI can generate starting points by identifying relevant competencies and drafting rubric criteria from job descriptions. The standard remains owned by people responsible for the hire [24]. Manatal enables teams to customize scoring parameters and view detailed ranking explanations [25]. Eightfold provides candidate rankings with detailed explanations of why someone scored highly for specific roles [25].

Job-specific models matter because generic scoring criteria miss role nuances. Technical positions require different evaluation standards than sales roles. Customer service assessments need different rubrics than engineering challenges.
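A job-specific scoring model can start as nothing more than a per-role table of criteria and weights that the hiring team reviews and approves. In this hypothetical sketch, ratings use an anchored scale from 1 (weak) to 4 (strong), the role names and weights are invented, and scoring refuses to run if any criterion in the role's rubric is unrated.

```python
# Hypothetical per-role rubrics: criterion -> weight (weights for each role sum to 1.0).
RUBRICS = {
    "backend_engineer": {"system_design": 0.4, "coding": 0.4, "communication": 0.2},
    "account_executive": {"discovery": 0.35, "objection_handling": 0.35, "communication": 0.3},
}


def rubric_score(role: str, ratings: dict[str, int], scale_max: int = 4) -> float:
    """Weighted 0-100 score from anchored ratings (1 = weak ... scale_max = strong)."""
    rubric = RUBRICS[role]
    missing = rubric.keys() - ratings.keys()
    if missing:
        raise ValueError(f"unrated criteria for {role}: {sorted(missing)}")
    # Map each rating onto 0..1: a 1 contributes nothing, a top rating contributes its full weight.
    weighted = sum(w * (ratings[c] - 1) / (scale_max - 1) for c, w in rubric.items())
    return round(100 * weighted, 1)


print(rubric_score("backend_engineer", {"system_design": 4, "coding": 3, "communication": 2}))
```

Because the same ratings cannot be scored against the wrong role's rubric, a sales candidate is never silently judged on engineering criteria.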

What level of control do we maintain over AI decisions?

Organizations maintain meaningful control when rubrics can be reviewed, modified, and approved before scoring begins [24]. If hiring managers cannot explain what a scoring rubric evaluates and why, it should not be deployed [24]. C-Factor AI uses a human-in-the-loop evaluation approach where AI assists with scoring while recruiters maintain final decision authority [28].

Control means more than just reviewing final scores. It means setting the criteria, approving the methodology, and maintaining override authority. The AI provides analysis and recommendations. Humans make the hiring decisions.

Multi-Location and Multi-Role Flexibility

Image Source: Phenom

Does it support global hiring across regions?

Remote work fundamentally changed recruitment geography. Companies no longer compete only for local talent: 73% expect more than half of their new hires to be international by 2026 [29].

Organizations ignoring language diversity sacrifice an estimated 7% in business opportunities annually due to artificially limited talent reach [29]. The math is stark: multilingual AI-powered systems can reach the full available talent pool rather than only the 60% comfortable with English-only processes [4].

Geographic reach determines whether you compete in global talent markets or remain confined to shrinking local pools.

Can it handle different languages and cultures?

Modern AI recruiting platforms operate in dozens of languages without requiring dedicated language specialists [30]. These systems engage candidates around the clock in their native language through text, email, or WhatsApp [30].

The talent distribution tells the story. Spanish speakers represent 30-50% of available workers in many US markets for nursing, warehouse, and food service roles [4]. Vietnamese communities dominate manufacturing sectors in specific regions. Mandarin and Cantonese speakers form significant populations in West Coast hospitality [4].

AI evaluates all candidates using identical criteria regardless of interview language [31]. This consistency reduces the bias that can creep into human-led multilingual interviews.

How adaptable is it for various job families?

Location-specific requirements demand flexible language support. Miami facilities emphasize Spanish and Haitian Creole capabilities. California locations prioritize Vietnamese and Mandarin support. Texas operations focus primarily on Spanish [4].

A well-designed platform scales from two languages to ten, across one location or fifty [4]. Role complexity matters less than geographic distribution when determining language requirements.

Insights, Dashboards, and Decision Support

What real-time insights are available?

Most recruiting teams rely on broken reporting processes. While 82% of recruiters use analytics to improve hiring strategies [32], 79% still rely on spreadsheets to manage reporting [32]. This disconnect between intention and execution creates blind spots that cost placements.

The best AI hiring tools replace manual compilation with live dashboards that track:

- Conversion rates at each stage
- Pipeline velocity and bottlenecks
- Candidate sentiment and engagement
- Recruiter performance and capacity

MokaHR delivers centralized KPIs covering time-to-hire, pipeline health, source quality, and offer acceptance with AI-powered anomaly alerts that surface bottlenecks and recommend actions [33]. Platforms like JobTalk update metrics daily and flag when organizations will likely miss fill targets, providing time to adjust sourcing strategies before deadlines pass [8].

Can we identify bottlenecks and drop-off points?

Drop-off analysis reveals where hiring processes break down. Between 30% and 40% of candidates exit between interview rounds [34]. Average decision delays of 7 to 10 days after final interviews compound the problem [34]. Over 50% of offer rejections trace back to poor communication rather than compensation [34].

Stage-by-stage analytics identify where candidates disengage. Whether during application submission, interview scheduling, or post-interview silence periods, data shows exactly where your process loses talent.
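Stage-by-stage drop-off is the share of candidates at one stage who do not reach the next. The sketch below computes it from per-stage counts; the pipeline numbers are illustrative, and the stage names are placeholders for whatever stages your ATS records.

```python
def funnel_report(stage_counts: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Drop-off rate between consecutive stages, given candidate counts per stage in order."""
    report = []
    for (stage, count), (next_stage, next_count) in zip(stage_counts, stage_counts[1:]):
        drop = 1 - next_count / count if count else 0.0
        report.append((f"{stage} -> {next_stage}", drop))
    return report


pipeline = [
    ("applied", 400), ("screened", 160), ("interview_1", 60),
    ("interview_2", 38), ("offer", 12), ("accepted", 9),
]
for transition, drop in funnel_report(pipeline):
    print(f"{transition:<26} {drop:6.1%}")
```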

How actionable is the reporting for hiring managers?

Reporting that only shows what happened wastes time. AI-driven recommendations transform descriptive metrics into prescriptive actions [32]. Systems analyze data patterns and provide specific steps for adjusting sourcing mix, refining screening processes, or reallocating budget to higher-performing channels [32].

Predictive analytics forecast hiring outcomes based on historical data and current pipeline velocity [8]. Organizations track cost-per-hire, source ROI, and budget utilization automatically rather than compiling reports manually [8]. The best tools tell you not just what happened, but what to do next.
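Cost-per-hire by source is the kind of figure such tools compile automatically. This sketch divides channel spend by the hires attributed to each channel and reports channels with spend but no hires separately instead of dividing by zero. The numbers are invented, and a complete cost-per-hire (for example, under the SHRM/ANSI standard) would also include internal costs such as recruiter time.

```python
from collections import Counter
from typing import Optional


def cost_per_hire_by_source(spend: dict[str, float], hire_sources: list[str]) -> dict[str, Optional[float]]:
    """Spend divided by hires for each channel; None when a channel produced no hires."""
    hires = Counter(hire_sources)
    return {src: (cost / hires[src] if hires[src] else None) for src, cost in spend.items()}


# Illustrative quarter: channel spend (USD) and the source recorded on each hire.
spend = {"job_board": 12000.0, "referrals": 4000.0, "agency": 30000.0, "social_ads": 5000.0}
hire_sources = ["job_board"] * 8 + ["referrals"] * 5 + ["agency"] * 3
report = cost_per_hire_by_source(spend, hire_sources)
for source, cph in sorted(report.items(), key=lambda kv: (kv[1] is None, kv[1] or 0)):
    print(source, "no hires" if cph is None else f"${cph:,.0f}")
```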

Time-to-Value and Deployment Speed

Image Source: Phenom

How quickly can we go live?

Deployment speed reveals which vendors understand modern hiring urgency and which remain trapped in outdated cycles. For context, a traditional corporate hiring process takes 45 to 60 days to fill a single senior position [35], while AI deployments typically need 7 to 12 months to achieve meaningful impact [36]. Planning shortens the path: organizations that conduct proper ATS planning achieve 40% faster shortlisting [37], and pre-implementation analysis delivers 30-35% faster team onboarding and smoother adoption [37].

Speed matters because hiring windows close quickly. The best vendors offer phased activation rather than all-or-nothing launches.

What is the typical implementation timeline?

Effective rollouts follow predictable phases that balance speed with stability. Months 1-3 establish foundation capabilities including resume screening and basic sourcing [38]. Months 4-8 introduce assessment automation and scheduling tools [38]. Months 9-12 activate advanced features like predictive analytics and bias detection [38]. Optimization continues beyond year two as systems learn and improve [38].

Organizations attempting to activate everything simultaneously experience higher failure rates. Phased approaches allow teams to adapt gradually while maintaining hiring quality.

What are common implementation blockers?

Data integration, not AI complexity, creates the primary bottleneck [36]. Organizations fail when they skip a thorough tech stack assessment, lack a clear implementation timeline with testing phases, or launch without established KPIs [39]. Firms that actively monitor metrics achieve 33% faster placements [37].

Successful deployments require detailed roadmaps covering training, process updates, and team communication [40]. Defining KPIs for each hiring stage, each with an acceptable error margin, surfaces implementation delays before they compound [40].
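One way to read "KPIs with error margins" is as a tolerance band around each stage target: a stage is flagged only when the observed value drifts past the band in the unfavorable direction. A minimal sketch under that assumption; the KPI names, targets, and margins are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StageKPI:
    """Hypothetical stage-level KPI with a tolerated relative deviation."""
    stage: str
    target: float
    actual: float
    margin: float                  # e.g. 0.10 tolerates a 10% miss
    higher_is_better: bool = False


def off_track(kpis: list[StageKPI]) -> list[str]:
    """Stages whose actual value falls outside the tolerance band."""
    flagged = []
    for k in kpis:
        if k.higher_is_better:
            # Rates: flag when the value drops below target minus the margin.
            breached = k.actual < k.target * (1 - k.margin)
        else:
            # Durations: flag when the value rises above target plus the margin.
            breached = k.actual > k.target * (1 + k.margin)
        if breached:
            flagged.append(k.stage)
    return flagged


KPIS = [
    StageKPI("Days to first screen", target=3, actual=5, margin=0.20),
    StageKPI("Days interview to decision", target=7, actual=7.5, margin=0.10),
    StageKPI("Screen-to-interview rate", target=0.30, actual=0.22,
             margin=0.15, higher_is_better=True),
]

print("Off track:", off_track(KPIS))
```

Direction matters: a longer time-to-decision is bad, while a lower conversion rate is bad, so each KPI records which way counts as a miss.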

The most common mistake is underestimating data preparation time. Clean, structured data feeds determine whether AI delivers value or confusion.

Ongoing Support and Partnership Model

Image Source: Salesforce


What does customer success support look like?

Post-implementation support separates platforms that drive lasting value from expensive shelf-ware. The best vendors don't just close tickets; they actively monitor your success metrics and suggest optimizations.

Proactive customer success teams conduct quarterly business reviews, identify underutilized features, and benchmark your performance against industry peers. These teams connect technical capabilities to business outcomes rather than simply troubleshooting issues. Does the vendor provide ongoing training programs? Do they deliver regular product updates aligned with regulatory changes? Can they offer strategic guidance as your hiring needs evolve?

The difference matters. Organizations with engaged customer success partners see 40% higher feature adoption and 60% better ROI within the first year.

Are there dedicated account managers?

Single points of contact prevent organizational knowledge loss and reduce response friction. Dedicated account managers understand your specific hiring context, remember past challenges, and anticipate future needs.

Shared account managers handling dozens of clients dilute attention and institutional memory. When evaluating vendors, confirm account manager assignment thresholds, escalation paths for urgent issues, and continuity plans when team members transition.

Ask directly: What's the client-to-account manager ratio? How do you handle knowledge transfer during team changes?

How responsive is technical support?

Response time expectations vary by issue severity. Critical failures blocking active hiring demand same-day resolution. Feature requests can tolerate longer timelines.

Support channels should include live chat, phone, email, and comprehensive self-service knowledge bases. Verify support availability hours, average first-response times, and resolution rates for common technical issues.
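To check a vendor's response-time and resolution claims during a pilot, you can summarize your own ticket log. A minimal sketch, assuming each ticket records a severity label, hours to first response, and whether it was resolved; the severity labels and the targets in `SLA_FIRST_RESPONSE_H` are hypothetical values to replace with the vendor's contractual terms.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Ticket:
    severity: str               # e.g. "critical", "normal", "feature"
    first_response_hours: float
    resolved: bool


# Hypothetical first-response targets in hours, per severity.
SLA_FIRST_RESPONSE_H = {"critical": 2, "normal": 24, "feature": 72}


def support_summary(tickets: list[Ticket]) -> dict[str, dict[str, float]]:
    """Average first-response time and resolution rate per severity."""
    by_severity = defaultdict(list)
    for t in tickets:
        by_severity[t.severity].append(t)
    return {
        sev: {
            "avg_first_response_h": mean(t.first_response_hours for t in items),
            "resolution_rate": sum(t.resolved for t in items) / len(items),
        }
        for sev, items in by_severity.items()
    }


def sla_breaches(summary, sla) -> list[str]:
    """Severities whose average first response exceeds the target."""
    return sorted(
        sev for sev, stats in summary.items()
        if sev in sla and stats["avg_first_response_h"] > sla[sev]
    )


TICKETS = [
    Ticket("critical", 1.5, True),
    Ticket("critical", 3.5, False),
    Ticket("normal", 10, True),
    Ticket("normal", 20, True),
    Ticket("feature", 48, False),
]

summary = support_summary(TICKETS)
print(summary)
print("SLA breaches:", sla_breaches(summary, SLA_FIRST_RESPONSE_H))
```

Averages can hide a single multi-day outage, so for critical tickets the worst case (`max`) is arguably the more telling number.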

The best vendors provide multiple escalation paths and maintain dedicated technical resources for enterprise clients. Poor support creates bottlenecks that eliminate any efficiency gains from the AI platform itself.

Cost Structure and ROI Calculation

What is the per-hire or subscription cost?

Recruiting software pricing falls into four main buckets: per-user seats, per-job, per-candidate usage, and enterprise contracts [9]. AI recruiting software ranges from USD 15 per user per month for budget ATS tools to USD 35,000+ per month for enterprise platforms, with most growing teams spending USD 99–599 per month for capable mid-range AI interview or screening tools [5].
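Because each pricing bucket scales with a different driver, quotes are hard to compare directly. Here is a minimal sketch that converts four hypothetical quotes, one per model, into a monthly cost for the same assumed usage, and then into cost per screened applicant, the comparison metric recommended in the ROI discussion of this section. All prices and usage figures are invented; they are not any vendor's list prices.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """Assumed monthly usage for the team being quoted."""
    recruiter_seats: int
    open_jobs: int
    screened_applicants: int


def monthly_cost(model: str, price: float, usage: Usage) -> float:
    """Monthly cost under one of the four common pricing models."""
    if model == "per_seat":
        return price * usage.recruiter_seats
    if model == "per_job":
        return price * usage.open_jobs
    if model == "per_candidate":
        return price * usage.screened_applicants
    if model == "enterprise":
        return price  # flat monthly contract fee
    raise ValueError(f"unknown pricing model: {model}")


def cost_per_screened(monthly: float, usage: Usage) -> float:
    return monthly / usage.screened_applicants


USAGE = Usage(recruiter_seats=5, open_jobs=12, screened_applicants=1_500)
QUOTES = {  # hypothetical quotes: (pricing model, unit price in USD)
    "Vendor A": ("per_seat", 99),
    "Vendor B": ("per_job", 60),
    "Vendor C": ("per_candidate", 0.40),
    "Vendor D": ("enterprise", 2_500),
}

costs = {name: monthly_cost(model, price, USAGE)
         for name, (model, price) in QUOTES.items()}
for name, monthly in sorted(costs.items(), key=lambda kv: kv[1]):
    print(f"{name}: USD {monthly:,.0f}/month, "
          f"USD {cost_per_screened(monthly, USAGE):.2f} per screened applicant")
```

Rerunning with a higher applicant volume shows how the ranking can flip: per-candidate pricing grows with volume, while seat and enterprise pricing do not.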

Organizations should budget an additional 50–75% on top of vendor quotes to cover costs that rarely appear in the initial pitch [5]. Hidden expenses, including implementation fees, ATS integration costs, SSO/SAML add-ons, premium support tiers, and overages, can add 30–100% to the headline subscription price [5].
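Applying that buffer is simple arithmetic. Here is a minimal sketch, assuming a USD 599/month mid-range tool billed for 12 months and a hypothetical itemized list of hidden costs; the helper names are illustrative.

```python
def tco_range(annual_quote: float, low_uplift: float = 0.50,
              high_uplift: float = 0.75) -> tuple[float, float]:
    """Budget range after adding the 50-75% buffer for costs missing from the quote."""
    return annual_quote * (1 + low_uplift), annual_quote * (1 + high_uplift)


def buffer_covers(annual_quote: float, hidden_costs: dict[str, float],
                  uplift: float = 0.50) -> bool:
    """True if the itemized hidden costs fit inside the chosen buffer."""
    return sum(hidden_costs.values()) <= annual_quote * uplift


annual_quote = 12 * 599  # USD 599/month subscription, 12 months
low, high = tco_range(annual_quote)
print(f"Quote: USD {annual_quote:,}; budget USD {low:,.0f} to USD {high:,.0f}")

# Hypothetical first-year line items that did not appear in the quote.
hidden = {"implementation": 2_000, "ATS integration": 1_500, "SSO/SAML add-on": 600}
print("50% buffer enough:", buffer_covers(annual_quote, hidden))
print("75% buffer enough:", buffer_covers(annual_quote, hidden, uplift=0.75))
```

Here the itemized costs (USD 4,100) exceed the 50% buffer (USD 3,594) but fit within the 75% buffer (USD 5,391), which is why an itemized estimate beats a flat percentage whenever the vendor will provide the numbers.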

How do we calculate expected ROI?

The formula for calculating ROI is straightforward: (Total Quantified Benefits − Total Costs) ÷ Total Costs [41]. A good ROI for recruiting software typically ranges from 3× to 10× in year one depending on volumes, role mix, agency baseline, and time-to-fill reductions [41]. Cost per screened applicant serves as the best apples-to-apples comparison across vendors [9].
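The ROI formula translates directly into code. Here is a minimal sketch; the benefit categories and figures are hypothetical placeholders for your own estimates of recruiter time saved, agency fees avoided, and vacancy costs reduced.

```python
def roi(total_benefits: float, total_costs: float) -> float:
    """ROI = (total quantified benefits - total costs) / total costs."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return (total_benefits - total_costs) / total_costs


# Hypothetical year-one figures in USD.
benefits = {
    "recruiter time saved": 60_000,
    "agency fees avoided": 40_000,
}
costs = 25_000  # subscription plus hidden costs

print(f"Year-one ROI: {roi(sum(benefits.values()), costs):.1f}x")
```

Under this definition, an ROI of 3× means net benefits equal three times the cost, so gross benefits are four times the cost. Check which convention a vendor uses before comparing its claims against the 3× to 10× range.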

What cost savings have other clients achieved?

Companies using AI in recruitment report a 27% reduction in cost-per-hire [1]. AltHire AI documents 70% faster time-to-hire and 33+ recruiter hours saved per week [5]. At a median recruiter hourly wage of USD 35.05, that translates to roughly USD 60,164 per recruiter annually [5].
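The recruiter-time figure is hours saved multiplied by the hourly wage and the number of working weeks in a year. Here is a minimal sketch, assuming 52 weeks per year and the values cited above. With these inputs the result is about USD 60,146, close to the cited USD 60,164; the source's exact rounding and week-count assumptions are not stated. The team size of four is hypothetical.

```python
def annual_time_value(hours_per_week: float, hourly_wage: float,
                      weeks_per_year: float = 52) -> float:
    """Dollar value of recruiter hours saved over one year."""
    return hours_per_week * hourly_wage * weeks_per_year


per_recruiter = annual_time_value(hours_per_week=33, hourly_wage=35.05)
print(f"Per recruiter: USD {per_recruiter:,.0f}")
print(f"Team of 4: USD {4 * per_recruiter:,.0f}")  # hypothetical team size
```

Time saved becomes a cash saving only if it displaces spend (overtime, agency fees, or additional hires); otherwise it is capacity freed for other work.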

Some platforms report a 50% reduction in recruiting costs [5]. AI tools that reduce time-to-fill from 45 days to 25 days save over USD 6,000 per hire in vacancy costs alone [1]. Senseloaf reports average cost-per-hire reductions of 40% within the first six months [1].
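The source does not state the daily vacancy cost behind the USD 6,000 figure, but the arithmetic implies it: saving USD 6,000 across 20 fewer open days means roughly USD 300 per vacant day. Here is a minimal sketch of the calculation in both directions; the USD 300 daily cost and the 50-hire annual volume are assumptions for illustration.

```python
def vacancy_savings(old_days: float, new_days: float,
                    daily_vacancy_cost: float) -> float:
    """Savings per hire from a shorter time-to-fill (never negative)."""
    days_saved = max(old_days - new_days, 0)
    return days_saved * daily_vacancy_cost


def implied_daily_cost(savings_per_hire: float, old_days: float,
                       new_days: float) -> float:
    """Back out the daily vacancy cost implied by a per-hire savings figure."""
    return savings_per_hire / (old_days - new_days)


print(f"Implied daily cost: USD {implied_daily_cost(6_000, old_days=45, new_days=25):,.0f}")
per_hire = vacancy_savings(45, 25, daily_vacancy_cost=300)
print(f"Per hire: USD {per_hire:,.0f}; 50 hires a year: USD {50 * per_hire:,.0f}")
```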

Team Capability and Adoption Readiness

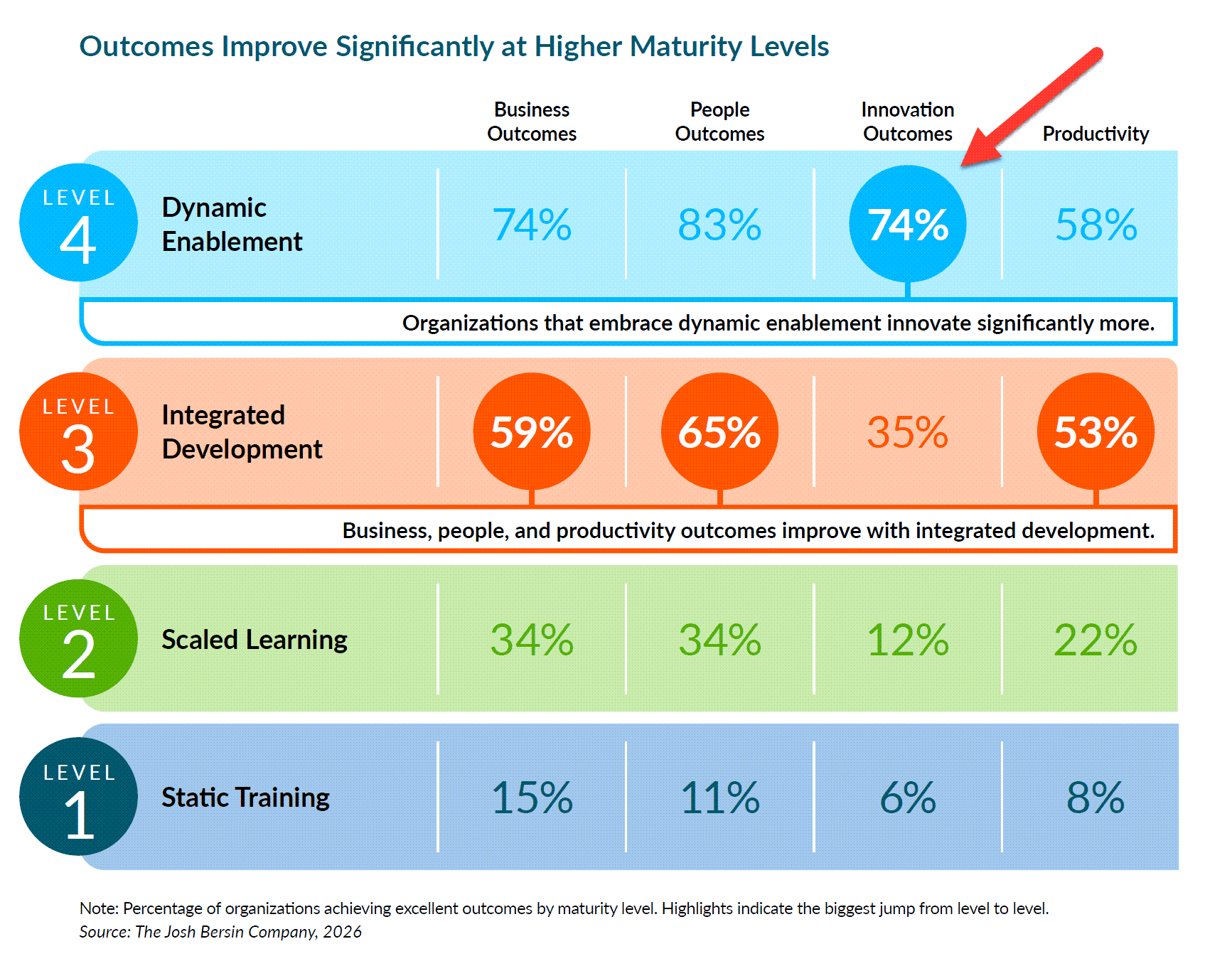

Image Source: Josh Bersin

What skills do our team members need?

Technology is rarely the problem. Roughly 70% of AI implementation challenges stem from human factors rather than technology itself [10]. Teams need four core competencies to succeed with AI hiring tools.

Awareness means understanding AI's capabilities and limitations, including bias risks and privacy implications [11]. Application involves using AI tools to draft content or analyze data while maintaining human oversight [11]. Adaptability requires staying curious as tools evolve [11]. Accountability means knowing when to question or override AI output [11].

These skills determine whether your team embraces or resists the technology. Without proper literacy, even the best AI hiring tool becomes an expensive paperweight.

How steep is the learning curve?

Cultural resistance creates bigger barriers than technical complexity. Some 52% of workers use AI quietly, reluctant to admit relying on it for important tasks [10], and 53% worry that using AI at work could signal that their job might be automated [10].

Role-specific training accelerates adoption more effectively than generic programs [10]. Frontline staff need bite-size tutorials focused on daily tasks. Technical roles require deep-dive labs covering integration and troubleshooting.

The learning curve flattens when people understand AI augments their capabilities rather than replacing their judgment.

What change management resources are provided?

Executive support determines adoption success. Leaders must champion AI usage as a strategic priority and communicate that AI empowers rather than replaces employees [10].

Clear guidelines prevent uncertainty. Define acceptable AI use, data handling policies, and task boundaries upfront [10]. Provide approved AI platforms, responsive support when technical blockers arise, and regular feedback loops to identify what works [10].

Recognition drives adoption more effectively than financial incentives alone [10]. Celebrate teams trying new workflows. Share success stories. Make AI adoption a source of professional growth rather than anxiety.

Vendor Credibility and References

Image Source: Bullhorn

Can you provide client references in our industry?

Industry-specific references reveal whether vendors truly understand your sector's unique challenges. Most companies avoid sharing implementation failures, making reference conversations particularly valuable [43]. Request contacts managing similar hiring volumes, facing identical compliance requirements, and handling comparable technical complexity.

References dealing with your exact regulatory environment or integration challenges provide actionable insights. Generic testimonials from different industries offer little value when evaluating AI hiring tools for your specific context.

What is your client retention rate?

Retention rates signal whether vendors deliver sustained value or create dependency without results. Recruiting firms using AI-driven retention systems reduced client churn by 31% within twelve months while increasing contract renewal value by 18% [6]. Vendors tracking these metrics demonstrate accountability for long-term outcomes.

Active sentiment monitoring resolves client complaints 53% faster than survey-based feedback systems [6]. Monitored firms score 18 points higher on client satisfaction scales [6]. High-health accounts achieve 88% renewal rates versus 54% for accounts showing negative sentiment [6].

If vendors cannot provide retention data, question their commitment to client success.

How do you handle projects that go wrong?

Vendor response to failures separates credible partners from those deflecting responsibility. Purchased solutions consistently outperform internally built proprietary tools [43]. The best vendors articulate specific remediation processes, guarantee resolution timelines, and offer financial protections when promised outcomes fail.

Ask for examples of failed implementations and recovery strategies. Vendors unwilling to discuss failures likely lack robust support processes when problems arise.

Innovation Roadmap and Responsible AI

How do you approach ethical AI development?

Responsible AI development requires more than good intentions. It demands rigorous application of ethical principles backed by valid data science [12]. The best AI hiring tools maintain validity and reliability by using job-relevant variables exclusively, never training on demographic or biometric inputs, and conducting systematic bias testing [12]. Science-based practices from industrial-organizational psychology, machine learning, and statistics create AI that makes hiring both efficient and fair [12].

Ethical frameworks rest on four pillars. Fairness and non-discrimination require algorithms to undergo regular bias testing across demographic groups [44]. Transparency and explainability enable organizations to explain AI recommendations in clear, non-technical terms [44]. Privacy and data protection demand explicit consent, with information used solely for stated purposes [44]. Human oversight ensures AI supports rather than replaces hiring decisions [44].
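Regular bias testing across demographic groups often starts with the Four-Fifths Rule named in the quick-reference table. Each group's selection rate is divided by the highest group's rate, and a ratio below 0.8 is treated as a sign of possible adverse impact. Here is a minimal sketch with invented group labels and counts. Under the US Uniform Guidelines on Employee Selection Procedures, this ratio is a rule of thumb for flagging results to investigate, not a legal finding or a complete fairness audit.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applicants (groups with no applicants are skipped)."""
    return {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate relative to the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates were selected in any group")
    return {g: rate / best for g, rate in rates.items()}


def flagged_groups(outcomes: dict[str, tuple[int, int]],
                   threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, r in impact_ratios(outcomes).items() if r < threshold)


# Hypothetical screening outcomes: group -> (advanced to interview, applicants)
OUTCOMES = {
    "Group A": (45, 100),
    "Group B": (30, 100),
    "Group C": (50, 100),
}

for group, ratio in impact_ratios(OUTCOMES).items():
    status = "below 4/5, investigate" if ratio < 0.8 else "ok"
    print(f"{group}: impact ratio {ratio:.2f} ({status})")
```

Small groups make these ratios noisy, so auditors often pair the check with a statistical significance test and repeat it at every stage the tool scores.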

What is the diversity of your development team?

Development team composition directly affects AI bias detection. Multidisciplinary teams from varied backgrounds provide multiple perspectives, identifying potential bias and ethical concerns before deployment [45]. Diverse voices from different disciplines help companies spot ethical dilemmas early, whether involving bias, privacy violations, or unintended consequences [46].

Teams lacking diversity miss critical blind spots. Homogeneous development groups create AI systems that reflect their limited perspectives, potentially embedding unconscious biases into hiring algorithms.

How do you balance innovation with responsible practices?

AI should empower human decision-making without replacing it entirely [12]. The most effective approach embeds ethics directly into the innovation process by including ethicists, legal experts, and diverse stakeholders from project inception [46]. Addressing ethical concerns during development rather than after deployment prevents costly failures and strengthens AI system foundations [46].

Responsible innovation means saying no to features that compromise fairness. Vendors committed to ethical development prioritize long-term trust over short-term competitive advantages. They build systems that enhance human judgment rather than circumvent it.

Quick Reference Guide: The 47 Essential Questions

This comprehensive breakdown organizes every critical question across the key evaluation areas. Use this reference to systematically assess vendors and identify gaps in their responses.

| Category | Focus Area | Core Questions | Reported Results | What to Watch For | Leading Tools |
| --- | --- | --- | --- | --- | --- |
| Strategic Foundation | Business alignment and stakeholder buy-in | What specific hiring problem does this solve? How does it align with our strategy? Who needs to be involved? | 35% higher profitability; 27% better ROI; 14% improved interview success; 30% cost reduction per hire | Define clear objectives; Engage HR, compliance, and affected stakeholders; Quantify current pain points | Industry-agnostic |
| AI Technology Core | Understanding the underlying models | What ML models power this? Is it predictive, prescriptive, or generative? What scientific methods are used? | Varies by implementation | Supervised learning for labeled data; Unsupervised for pattern discovery; Reinforcement for optimization; Deep learning complexity | Platform-dependent |
| Data Foundation | Training sources and quality | What data trains the models? First-party or third-party sources? How often is data refreshed? | Better accuracy with first-party data | First-party: owned, controlled, accurate; Third-party: broader but less precise; Update frequency affects relevance | Resume databases, ATS history, LinkedIn, GitHub |
| Performance Validation | Accuracy and real-world results | What is the predictive accuracy rate? Are there peer-reviewed studies? How does performance vary by role? | 30% satisfaction boost; 25% turnover reduction; 20% retention improvement; 78% better decisions; 25% advantage over manual screening | 42% of companies use AI screening; Evidence quality varies; Performance depends on role complexity | L'Oreal case study |
| Technical Integration | System compatibility and workflows | What are the technical requirements? How does it connect to existing systems? Are workflows automated? | 40% faster shortlisting with proper ATS planning | Deep ATS integration; API-first architecture; Native integrations beat third-party; Bidirectional data flow essential | ATS-dependent |
| Bias Prevention | Fairness testing and monitoring | What bias tests are conducted and how often? Can you explain fairness metrics? How is drift monitored? | 30% diversity increase; 40% bias reduction with proper controls | Counterfactual and consistency testing; Four-Fifths Rule compliance; Statistical parity; Continuous monitoring required | Methodology-specific |
| Discrimination Safeguards | Protected attributes and proxy detection | Does the tool avoid protected characteristics? How are proxy variables identified? What prevents discriminatory outcomes? | Risk mitigation essential | ZIP codes, graduation dates, names as proxies; Paired testing detects bias; Explainable scoring required | Amazon's failed screener example |
| Regulatory Compliance | Legal requirements and certifications | What certifications do you hold? How do you handle international regulations? Are compliance features built in? | EU fines up to €35M | SOC 2, ISO 27001, GDPR required; EU AI Act (Aug 2024); US state laws 2025-2026; Built-in monitoring essential | MokaHR, SAP SuccessFactors |
| Security Architecture | Data protection and encryption | What encryption standards are used? How is PII protected? What audits have been completed? | 46% of breaches involve PII; $169 average cost per record | TLS 1.2+ transport; AES-256 storage; Multi-factor authentication; Tokenization over redaction | Grayscale AWS model |
| Candidate Experience | Journey design and accessibility | What does the candidate journey look like? Is the assessment accessible? How does it impact employer brand? | 37% use AI for company research | Self-serve scheduling; Timeline transparency; AI/human choice; Accessibility compliance; Trust is critical | User experience varies |
| Customization Control | Assessment tailoring and oversight | How customizable are criteria? Can we create job-specific models? What control do we maintain? | Control determines success | Define competencies first; Explicit rubrics required; Human-in-the-loop essential; Explainable decisions | Dover, Workable, CLARA, Vervoe, Manatal, Eightfold, C-Factor AI |
| Global Scalability | Multi-location and language support | Does it support global hiring? Can it handle different languages? How adaptable is it across roles? | 73% expect 50%+ international hires by 2026; 7% opportunity loss from language gaps | Spanish, Vietnamese, Mandarin critical in key markets; 24/7 native language support; Consistent evaluation across regions | Market-dependent |
| Analytics Intelligence | Insights and decision support | What real-time insights are available? Can we identify bottlenecks? How actionable is reporting? | 82% use analytics; 79% still use spreadsheets; 30-40% drop-off between interviews | Live dashboards replace manual reporting; Predictive analytics; Anomaly alerts; Stage-by-stage analysis | MokaHR, JobTalk |
| Implementation Speed | Deployment timeline and obstacles | How quickly can we go live? What is the typical timeline? What blocks implementation? | 40% faster shortlisting with proper planning; 30-35% quicker onboarding | Traditional: 45-60 days per hire; AI: 7-12 months to impact; Data integration is primary bottleneck | Phenom methodology |
| Partnership Model | Support structure and relationship | What does customer success look like? Are there dedicated managers? How responsive is support? | Relationship quality affects outcomes | Quarterly reviews; Ongoing training; Dedicated contacts; Response time by severity; Multiple channels | Vendor-specific |
| Financial Structure | Pricing and return calculation | What are the costs? How do we calculate ROI? What savings have others achieved? | 27% lower cost-per-hire; 70% faster hiring; 33+ hours saved weekly; 3-10× ROI typical | $15/user to $35,000+ monthly; Budget 50-75% extra for hidden costs; Cost per screened applicant best metric | AltHire AI, Senseloaf |
| Team Readiness | Skills and change management | What skills do we need? How steep is the learning curve? What change resources are provided? | 70% of challenges stem from human factors; 52% use AI quietly | AI literacy: Awareness, Application, Adaptability, Accountability; Role-specific training; Executive championing | Organization-dependent |
| Vendor Validation | Track record and client satisfaction | Can you provide industry references? What is your client retention rate? How do you handle failures? | 31% churn reduction; 88% renewal for high-health accounts vs 54% for negative-sentiment accounts | Request references of similar complexity; Retention signals value; Transparent failure recovery; Purchased beats proprietary | Reference-dependent |
| Ethical Development | Responsible AI and innovation | How do you approach ethical development? What team diversity exists? How do you balance innovation with responsibility? | Long-term sustainability critical | Job-relevant variables only; No training on demographic data; Regular bias testing; Transparent algorithms; Human oversight | Development team dependent |

Conclusion

Choosing the right AI hiring tool requires asking targeted questions across strategic alignment, technical capabilities, fairness testing, compliance, and ROI metrics. Organizations that rush implementation without addressing these 47 questions risk wasted budgets and failed deployments. The vendors worth partnering with provide transparent answers about their models, bias testing protocols, security certifications, and client outcomes.

Start by defining specific hiring challenges and quantifying current pain points. Then evaluate vendors systematically across technical integration, regulatory compliance, and team readiness. The best AI hiring decisions balance innovation with accountability, automation with human oversight, and efficiency with fairness. Take one category at a time, and the right solution will emerge clearly.

FAQs

Q1. What are the most important factors to consider when choosing an AI hiring tool? When selecting an AI hiring tool, prioritize strategic alignment with your hiring challenges, technical integration capabilities with your existing systems, fairness testing and bias mitigation protocols, regulatory compliance certifications, and measurable ROI. Organizations should also evaluate the tool's predictive accuracy, data privacy measures, candidate experience impact, and vendor support structure to ensure the solution delivers sustained value beyond initial implementation.

Q2. How can organizations ensure AI hiring tools don't introduce bias into recruitment processes? Organizations should verify that vendors conduct regular bias testing using methods like counterfactual testing, consistency testing, and the Four-Fifths Rule. The tool should avoid using protected characteristics and identify proxy variables that correlate with demographic attributes. Implementing human-in-the-loop evaluation, maintaining explainable scoring systems, and conducting continuous monitoring of outcomes across demographic groups helps prevent discriminatory results and ensures fairness throughout the hiring process.

Q3. What kind of ROI can companies expect from implementing AI hiring tools? Reported results include a 27% reduction in cost-per-hire, a 30% increase in employee satisfaction, and a 25% reduction in turnover within the first year. AI tools can reduce resume review time by up to 90%, save recruiters 33+ hours per week, and, with proper planning, achieve 40% faster shortlisting. A good ROI for recruiting software ranges from 3× to 10× in year one, though organizations should budget an additional 50-75% beyond vendor quotes to cover implementation, integration, and support costs.

Q4. How long does it typically take to implement an AI hiring tool and see results? AI hiring tool deployments typically require 7 to 12 months to move from pilot to meaningful impact. Organizations using phased rollouts see better results: months 1-3 focus on foundation elements like resume screening, months 4-8 introduce assessment automation, and months 9-12 incorporate advanced features like predictive analytics. Companies performing thorough pre-implementation analysis report 30-35% faster team onboarding and can achieve up to 40% faster shortlisting once fully deployed.

Q5. What compliance requirements should AI hiring tools meet in 2026? AI hiring tools must comply with multiple regulatory frameworks, including the EU AI Act (in force since August 2024) with fines up to €35 million, California regulations (October 2025), Illinois amendments (January 2026), and Colorado's AI Act (February 2026). Vendors should hold SOC 2 and ISO 27001 certifications, ensure GDPR compliance for European candidates, conduct regular impact assessments, and provide built-in compliance monitoring features. Organizations must verify that tools support human oversight and maintain audit trails for all AI-driven decisions.

References

[1] - https://getclara.io/blog/10-questions-to-ask-before-choosing-an-ai-hiring-tool

[2] - https://www.cangrade.com/blog/talent-acquisition/your-ai-hiring-tool-evaluation-checklist-2026-guide-for-hr-talent-leaders/

[3] - https://dev.to/hemangjoshi37a/the-enterprise-ai-buyers-checklist-12-questions-to-ask-before-hiring-an-ai-consultancy-oai

[4] - https://www.ibm.com/think/topics/ai-in-recruitment

[5] - https://www.bristowholland.com/insights/thought-leadership/10-ai-recruitment-strategies-to-enhance-your-hiring-process/

[6] - https://pmc.ncbi.nlm.nih.gov/articles/PMC12163046/

[7] - https://www.sciencedirect.com/science/article/pii/S266672152200028X

[8] - https://www.ironhack.com/us/blog/ai-in-recruitment-how-machine-learning-is-shaping-the-future-of-hiring

[9] - https://www.celential.ai/blog/machine-learning-in-recruitment/

[10] - https://www.herohunt.ai/blog/machine-learning-in-recruitment-a-deep-dive/

[11] - https://www.redhat.com/en/topics/ai/predictive-ai-vs-generative-ai

[12] - https://pmc.ncbi.nlm.nih.gov/articles/PMC9516509/

[13] - https://www.forbes.com/sites/bernardmarr/2024/10/15/the-ai-revolution-how-predictive-prescriptive-and-generative-ai-are-reshaping-our-world/

[14] - https://bernardmarr.com/generative-predictive-prescriptive-ai-what-they-mean-for-business-applications/

[15] - https://www.ibm.com/think/topics/generative-ai-vs-predictive-ai-whats-the-difference

[16] - https://everworker.ai/blog/ai_recruiting_data_fair_fast_compliant_hiring

[17] - https://amperity.com/blog/first-party-vs-third-party-data-what-marketers-need-to-know-in-2026

[18] - https://www.plan-d.com/en/faq/how-often-does-an-ai-model-need-to-be-retrained

[19] - https://www.mokahr.io/myblog/ai-recruiting-accuracy-hiring-results/

[20] - https://www.bbc.com/worklife/article/20240214-ai-recruiting-hiring-software-bias-discrimination

[21] - https://www.seekout.com/blog/add-ai-to-hiring-tech-stack/

[22] - https://blog.iqtalent.com/building-ai-recruiting-technology-stack

[23] - https://www.gem.com/blog/recruitment-automation-tools

[24] - https://www.atlassian.com/agile/project-management/workflow-automation-software

[25] - https://x0pa.com/blog/ai-bias-in-recruitment-metrics-for-detection/

[26] - https://www.warden-ai.com/resources/ai-fairness-testing-metrics-methods

[27] - https://www.evidentlyai.com/ml-in-production/data-drift

[28] - https://peoplemanagingpeople.com/hr-operations/ai-drift/

[29] - https://www.coblentzlaw.com/news/new-california-regulations-regarding-ai-use-in-hiring-and-employment/

[30] - https://www.heymilo.ai/blog/ai-hiring-bias-detection-prevent-unfair-hiring

[31] - https://www.goperfect.com/blog/how-to-reduce-bias-in-ai-powered-candidate-selection-a-2026-guide-for-recruiting-teams

[32] - https://www.aclu.org/news/racial-justice/how-artificial-intelligence-might-prevent-you-from-getting-hired

[33] - https://journals.law.unc.edu/nccivilrightslaw/2025/01/ai-and-hiring-discrimination-the-impact-artificial-intelligence-hiring-tools-will-have-on-companies/

[34] - https://www.warden-ai.com/resources/proxy-discrimination-ai

[35] - https://www.mokahr.io/articles/en/the-best-ai-recruitment-compliance-software

[36] - https://www.potomaclaw.com/news-The-Global-Compliance-Challenge-of-AI-Recruiting

[37] - https://everworker.ai/blog/ai_recruitment_candidate_data_security_compliance

[38] - https://ceriumnetworks.com/ensuring-safe-secure-ai-adoption-a-guide-for-hr-professionals/

[39] - https://grayscaleapp.com/ai-data-security-and-the-hiring-platforms-you-can-trust/

[40] - https://witness.ai/blog/ai-security-platform/

[41] - https://redactor.ai/blog/pii-redaction-software-guide

[42] - https://builtin.com/artificial-intelligence/ai-employer-brand-intelligence

[43] - https://sapia.ai/resources/blog/ai-diversity-recruiting/

[44] - https://www.forbes.com/councils/forbesbusinesscouncil/2025/06/18/using-ai-in-hiring-to-elevate-the-candidate-journey/

[45] - https://www.pageexecutive.com/recruitment-expertise/executive-insights/hiring-process-more-inclusive-with-ai

[46] - https://www.wecreateproblems.com/blog/accessibility-in-ai-hiring

[47] - https://revelmarketing.com/blog/how-ai-changed-employer-brand-strategy-and-what-to-do-about-it/

[48] - https://www.testgorilla.com/blog/candidate-journey-age-of-ai/

[49] - https://www.cio.com/article/4160432/ai-is-scoring-your-job-candidates-can-you-explain-how.html

[50] - https://www.dover.com/blog/best-application-scoring-tools-for-recruiters-(october-2025-update)

[51] - https://getclara.io/features/customizable-ai

[52] - https://vervoe.com/ai-assessment-tools/

[53] - https://thetalentgames.com/ai-assessment-platforms-for-recruiters/

[54] - https://www.heymilo.ai/blog/interview-globally-on-autopilot-with-heymilos-multilingual-support

[55] - https://www.leaderonomics.com/articles/business/how-to-recruit-multilingual-hourly-workers-with-AI-chatbots

[56] - https://www.carv.com/blog/multi-language-recruiting-with-ai

[57] - https://avahi.ai/blog/how-to-handle-multilingual-candidate-interviews-with-ai-support/

[58] - https://www.jobvite.com/blog/from-spreadsheets-to-strategy-how-jobvites-ai-powered-dashboards-turn-recruiting-data-into-business-impact/

[59] - https://www.mokahr.io/articles/en/the-best-real-time-hiring-dashboards

[60] - https://www.jobtalk.ai/features/analytics

[61] - https://www.linkedin.com/top-content/recruitment-hr/optimizing-recruitment-processes/identifying-candidate-drop-off-points-in-hiring/

[62] - https://www.slideshare.net/slideshow/rapid-ai-workforce-deployment-strategy-scale-ai-teams-in-7-days/286456233

[63] - https://www.unframe.ai/blog/ai-agent-deployment-6-months-vs-days

[64] - https://www.stardex.com/blog/ats-tool-deployment-and-ai-recruitment-software-for-executive-search

[65] - https://equip.co/resources/ai-in-hiring-stages-and-applications/

[66] - https://hootrecruit.com/blog/ai-implementation-pitfalls-recruitment/

[67] - https://www.linkedin.com/pulse/5-steps-build-ai-hiring-roadmap-recruitmilitary-jnjgc

[68] - https://www.hiretruffle.com/blog/ai-recruiting-software-pricing-guide

[69] - https://althire.ai/feeds/blog/ai-recruiting-software-pricing-2026

[70] - https://everworker.ai/blog/ai_recruitment_tool_roi_calculation_playbook

[71] - https://www.senseloaf.ai/blog-articles/how-ai-in-recruitment-reduces-cost-per-hire

[72] - https://www.worklytics.co/blog/how-to-accelerate-the-ai-learning-curve-in-your-organization

[73] - https://www.fisherphillips.com/en/insights/insights/what-ai-skills-should-hiring-employers-look-for

[74] - https://fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo/

[75] - https://arete.so/blog/ai-customer-retention-recruiting-firms

[76] - https://www.phenom.com/ai-ethics

[77] - https://blog.iqtalent.com/ethical-ai-recruiting-best-practices-framework

[78] - https://www.linkedin.com/pulse/balancing-innovation-integrity-ethical-ai-hiring-ann-bedford-flood-0rtbf

[79] - https://celestialsys.com/blogs/ethical-ai-balancing-innovation-with-responsibility/